Isolating AI Coding Agents on Bare Metal: Incus, Podman, and a $50/Month Server

Singular runs 4–6 client projects at any given time. Healthcare platforms. Financial systems. Each project needs its own runtimes (JDK, Node, Python, Rust, often a mix), its own databases, its own search engine, its own container runtime for integration tests. And increasingly, each project has AI coding agents running autonomously inside it, grinding through tasks overnight while we sleep.

That is a lot of moving parts to keep isolated on a single machine.

We’re a small team. Two-to-three-person strike teams per engagement. We don’t have the luxury of one-project-per-engineer. Everyone juggles multiple codebases. And when you add autonomous agents to the mix, agents that install packages, run builds, execute tests, and modify code without asking, the question becomes: how do you keep all of this organized, isolated, and recoverable without buying everyone a $4,000 workstation?

We built sing to answer that. It’s a CLI that provisions bare-metal servers, creates fully isolated development environments, and manages the lifecycle of agentic development workflows. This post covers the infrastructure decisions behind it and the engineering problems we solved along the way.

The Economics: Bare Metal Over Mac Minis

Before the architecture, the math.

A Mac Mini M4 Pro configured for serious multi-project development runs $1,500–$2,000 per engineer. A Mac Studio with an Ultra chip starts at $4,000. And you still get macOS: Docker Desktop for containers, Rosetta translation for Linux images, no system-level isolation primitives.

A Hetzner dedicated server with 8 cores, 64GB RAM, and a terabyte of NVMe storage costs about $50/month. Real Linux. Real isolation primitives. Enough horsepower to run 5–6 fully isolated project environments simultaneously, each with its own databases, runtimes, and AI agents.

Every Singular engineer gets their own server. Project configurations are shared through sing. Pull the config, run one command, and you have an environment identical to everyone else’s. The machine is defined in YAML and provisioned deterministically.

$50/month per engineer. Not $4,000 up front.

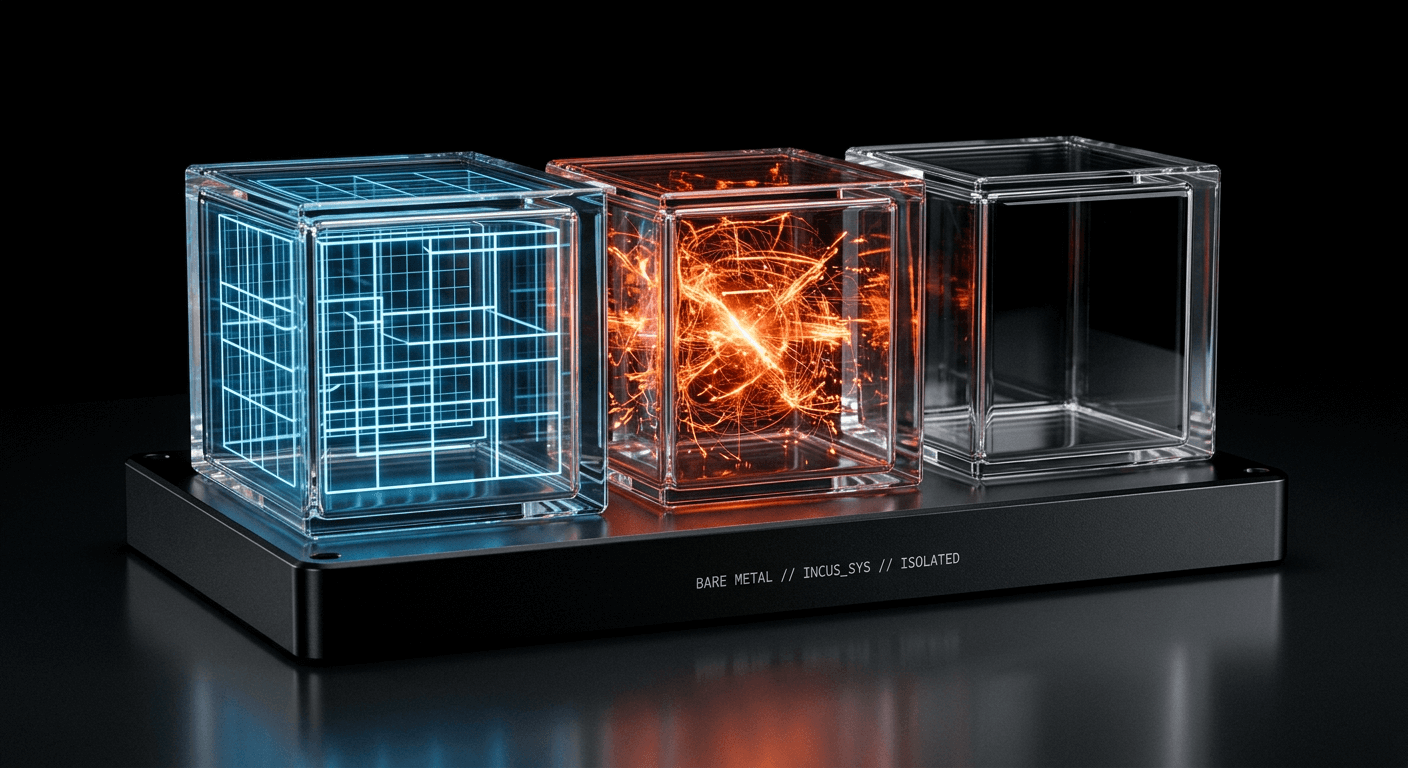

System Containers, Not Application Containers

The foundation of sing is Incus, a system container manager and the community fork of Canonical’s LXD.

The key word is system containers. Most engineers think of containers as Docker-style application containers: a single process with namespace isolation and cgroups. System containers are a different thing entirely. Each one is a full Linux userspace with its own filesystem, its own network interface, its own systemd, its own init. From inside, it looks and feels like a dedicated machine. From the host, it’s lightweight. No hypervisor. A fraction of the memory cost of a VM.

Every project environment in sing is an Incus system container with cgroup-enforced CPU and memory limits. An agent burning 4 cores in one project is hard-capped by the cgroup. The other projects don’t feel it. The filesystems are completely separate. There’s no shared runtime, no shared socket, no shared anything between projects.
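To make the cgroup limits concrete, here is a minimal sketch of how a tool might translate a project's resource settings into Incus calls. The class and method names are hypothetical and are not taken from sing; only the `limits.cpu` and `limits.memory` instance keys are Incus's own. The helper only builds argument lists and executes nothing.

```java
import java.util.List;

/** Hypothetical helper: builds the Incus CLI calls that cap one project container. */
final class ResourceLimits {
    private ResourceLimits() {}

    /** Returns one argv list per command; nothing is executed here. */
    static List<List<String>> limitCommands(String container, int cpus, String memory) {
        return List.of(
            // limits.cpu: how many CPUs the instance may use
            List.of("incus", "config", "set", container, "limits.cpu=" + cpus),
            // limits.memory: memory cap for the instance, enforced through cgroups
            List.of("incus", "config", "set", container, "limits.memory=" + memory)
        );
    }
}
```

Returning argument lists rather than shell strings keeps quoting out of the picture and makes the generated commands easy to log or test before they are run.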

We support two storage backends. The default is dir, which is backed by the host filesystem and works on any server with zero setup. For hard disk quotas, ZFS is opt-in: Incus sets a refquota on the ZFS dataset, and the kernel enforces it.

One command provisions the host:

    sudo sing host init

That installs Incus, creates the storage pool, configures networking and firewall bridge rules, and caches the base Ubuntu 24.04 image. Run it once. The server is ready.

Rootless Podman Inside System Containers

Inside each Incus container, infrastructure services (databases, search engines, message brokers) run as rootless Podman containers.

Why Podman over Docker? Podman is daemonless. Each user runs their own container runtime in their own user namespace. There is no central daemon, no privileged root process managing containers, no shared socket. Services start with podman run -d --restart=always, and loginctl enable-linger keeps them alive across container reboots. Each project’s services are completely invisible to every other project.
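As an illustration, a service definition could be turned into a `podman run` invocation roughly like this. The `ServiceSpec` record is a hypothetical shape, not sing's actual model, and mapping each port to the same number on both sides is an assumption. The flags themselves (`-d`, `--restart=always`, `--name`, `-p`, `-e`) are standard Podman options.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Hypothetical shape of one infrastructure service in a project. */
record ServiceSpec(String name, String image, List<Integer> ports, Map<String, String> environment) {

    /** Builds the argv for a detached, auto-restarting rootless Podman container. */
    List<String> podmanRunCommand() {
        List<String> argv = new ArrayList<>(
            List.of("podman", "run", "-d", "--restart=always", "--name", name));
        for (int port : ports) {
            // Assumption: publish each port under the same number inside the system container.
            argv.add("-p");
            argv.add(port + ":" + port);
        }
        // Sort keys so the generated command is stable across runs.
        for (Map.Entry<String, String> e : new TreeMap<>(environment).entrySet()) {
            argv.add("-e");
            argv.add(e.getKey() + "=" + e.getValue());
        }
        argv.add(image);
        return argv;
    }
}
```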

Getting this working reliably inside Incus system containers was the first real engineering challenge. Ubuntu 24.04 ships with apparmor_restrict_unprivileged_userns=1, which blocks the user namespaces that rootless Podman depends on. The fix is two Incus config flags: security.nesting=true and raw.lxc=lxc.apparmor.profile=unconfined, followed by a container restart. Two lines of config, once you know them. Finding them cost us an hour of debugging AppArmor denials in kernel logs.
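For reference, the two flags and the restart can be expressed as three Incus CLI calls. The sketch below only builds the argument lists. The class name is hypothetical, and how sing actually applies these settings is not described here.

```java
import java.util.List;

/** Hypothetical helper: the Incus calls that let rootless Podman run inside a system container. */
final class NestingConfig {
    private NestingConfig() {}

    /** Returns one argv list per command, in the order they should run. */
    static List<List<String>> enableRootlessPodman(String container) {
        return List.of(
            // Allow nested containers inside the instance.
            List.of("incus", "config", "set", container, "security.nesting=true"),
            // Run the instance without an AppArmor profile so user namespaces are not blocked.
            List.of("incus", "config", "set", container, "raw.lxc=lxc.apparmor.profile=unconfined"),
            // The settings take effect after a restart.
            List.of("incus", "restart", container)
        );
    }
}
```

Note that an unconfined AppArmor profile removes one layer of confinement from the container, so it is worth applying only to instances that need rootless Podman.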

Taming Testcontainers

If your stack includes Java, you’re probably using Testcontainers for integration tests. Testcontainers spins up databases and services in containers for each test run, then tears them down when the tests finish. In theory.

In practice, containers linger. The JVM crashes mid-test. The reaper process (Ryuk) doesn’t fire. An AI agent running your test suite gets killed before cleanup happens. These orphaned containers pile up silently, holding ports and consuming memory, until someone’s test suite starts failing because port 5432 is already taken by a zombie Postgres from three runs ago.

Our architecture eliminates this class of problem. Each project has its own Incus system container with its own rootless Podman runtime. Orphaned Testcontainers are scoped to that project’s Podman, invisible to every other project. And if things get truly out of hand, you destroy the Incus container and recreate it from the YAML config. Every orphan, every zombie, every bit of accumulated state: gone. Ninety seconds later, you’re back to a clean environment.

We disable Ryuk entirely (TESTCONTAINERS_RYUK_DISABLED=true) and let the environment boundary be the cleanup mechanism. Simpler, more reliable, and it eliminates a reaper process that was itself a frequent source of orphaned containers.
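A sketch of what that looks like when launching a test run from Java: the environment variable is set on the child process before it starts. Pointing `DOCKER_HOST` at the rootless Podman socket is an assumption about the setup (it requires the user's Podman socket to be enabled), and the class name is hypothetical.

```java
import java.util.Map;

/** Hypothetical helper: prepares the environment for a test run against rootless Podman. */
final class TestRunEnv {
    private TestRunEnv() {}

    /** Disables Ryuk and points Testcontainers at the given user's Podman socket. */
    static ProcessBuilder withoutRyuk(ProcessBuilder builder, int uid) {
        Map<String, String> env = builder.environment();
        // Documented Testcontainers switch: do not start the Ryuk reaper container.
        env.put("TESTCONTAINERS_RYUK_DISABLED", "true");
        // Assumption: the rootless Podman API socket lives at its default per-user path.
        env.put("DOCKER_HOST", "unix:///run/user/" + uid + "/podman/podman.sock");
        return builder;
    }
}
```

With Ryuk off, nothing removes leftover containers automatically, which is acceptable here only because the whole environment can be destroyed and recreated.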

Declarative Config, Disposable Environments

A project is defined by a single YAML file:

    name: acme-health
    resources:
      cpu: 4
      memory: 12GB
      disk: 150GB
    runtimes:
      jdk: 25
      node: 22
    services:
      postgres:
        image: postgres:16
        ports: [5432]
        environment:
          POSTGRES_DB: acme
          POSTGRES_USER: dev
          POSTGRES_PASSWORD: dev
    agent:
      type: claude-code
      auto_snapshot: true

The config is the source of truth. The container is derived state. Destroy it and recreate from the same file. Every operation is idempotent.

This design pays off most when things go wrong. Overnight agent runs go sideways sometimes. When they do, you don’t spend the morning debugging a corrupted environment. You snapshot before the run, check the results when you wake up, and if the agent made a mess, roll back. If the environment is somehow unsalvageable, destroy and recreate from YAML. The recovery path is always “rebuild from config,” and it always works because the config is the only thing that matters.

Project configs live in a shared repository. sing fetches a descriptor, deep-merges shared defaults with project-specific overrides, prompts for per-developer values (name, email, SSH key), and writes a ready-to-use config. Every engineer on the same project gets the same environment because it’s derived from the same source.
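The deep-merge step can be sketched as a recursive map merge over the plain maps a YAML parser returns. This is an illustration under assumptions, not sing's implementation: the class name is hypothetical, overrides win on conflict, and lists are replaced wholesale rather than concatenated.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical helper: merges project-specific overrides onto shared defaults. */
final class ConfigMerge {
    private ConfigMerge() {}

    /** Returns a new map; nested maps are merged key by key, everything else is replaced. */
    static Map<String, Object> deepMerge(Map<String, Object> defaults, Map<String, Object> overrides) {
        Map<String, Object> merged = new LinkedHashMap<>(defaults);
        for (Map.Entry<String, Object> entry : overrides.entrySet()) {
            Object base = merged.get(entry.getKey());
            Object override = entry.getValue();
            if (base instanceof Map<?, ?> baseMap && override instanceof Map<?, ?> overrideMap) {
                // Both sides are maps: recurse so sibling keys from the defaults survive.
                merged.put(entry.getKey(), deepMerge(asStringMap(baseMap), asStringMap(overrideMap)));
            } else {
                // Scalars, lists, and type mismatches: the override wins.
                merged.put(entry.getKey(), override);
            }
        }
        return merged;
    }

    @SuppressWarnings("unchecked")
    private static Map<String, Object> asStringMap(Map<?, ?> map) {
        // YAML mappings with string keys parse to Map<String, Object>; this cast assumes that.
        return (Map<String, Object>) map;
    }
}
```

For example, shared defaults of `cpu: 2, memory: 8GB` merged with a project override of `cpu: 4` yield `cpu: 4, memory: 8GB`.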

Building It in Java 25 + GraalVM

We built sing in Java 25 and compile it to a native binary with GraalVM. Java for a CLI raises eyebrows, but hear us out.

We’ve been writing Java for nearly two decades. The language has changed more in the last five years than in the previous fifteen. Records for value types. Sealed interfaces for domain modeling. Pattern matching. Virtual threads for I/O. Text blocks for the YAML and shell scripts we generate. It’s a different language than what most people remember.

GraalVM’s native-image compiler turns the whole thing into a static binary with sub-millisecond startup. No JVM, no classpath. The entire dependency footprint is two libraries: picocli for command parsing and SnakeYAML Engine for YAML. No framework, no reflection metadata, no annotation magic. Our data records use explicit factory methods instead of reflection-based deserialization, which means zero native-image configuration beyond what picocli generates automatically at compile time.
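A minimal sketch of the explicit-factory pattern, assuming the YAML parser hands back plain maps. The record name and error messages are hypothetical. The fields mirror the `resources` keys in the example config above.

```java
import java.util.Map;

/** Hypothetical config record built by hand, so native-image needs no reflection metadata for it. */
record Resources(int cpu, String memory, String disk) {

    /** Builds a Resources from a parsed YAML mapping, validating each field explicitly. */
    static Resources fromMap(Map<String, Object> map) {
        return new Resources(
            requireInt(map, "cpu"),
            requireString(map, "memory"),
            requireString(map, "disk"));
    }

    private static int requireInt(Map<String, Object> map, String key) {
        if (map.get(key) instanceof Number n) {
            return n.intValue();
        }
        throw new IllegalArgumentException("resources." + key + " must be a number");
    }

    private static String requireString(Map<String, Object> map, String key) {
        if (map.get(key) instanceof String s) {
            return s;
        }
        throw new IllegalArgumentException("resources." + key + " must be a string");
    }
}
```

The trade-off is a little boilerplate per record in exchange for validation errors that name the offending key and a build with no reflection configuration to maintain.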

The result: a single binary you install with curl | bash. No runtime dependencies. No containers to run the tool that manages your containers.

Agent Sandboxing

There is no AI in sing. No LLM calls. No “AI-powered” anything. The tool is deterministic infrastructure.

The agents (Claude Code, Codex, Gemini, or whatever comes next) run inside the sandboxes that sing creates. They’re guests, not hosts. An agent gets full access to its project’s code, runtimes, and services, and zero access to anything outside the container. It can install packages, run builds, start services, execute tests, all within its sandbox. It cannot reach another project’s database, read another project’s source code, or affect another project’s agent session.

Before a headless agent run, sing snapshots the container. Guardrails monitor wall-clock time, idle periods, and commit frequency from the host, outside the agent’s reach. If the agent spirals, the watcher stops the session and rolls back. The next morning, a report aggregates git activity, task completion, and guardrail triggers into a single summary. You know exactly what happened before you open the code.
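The guardrail check itself can be modeled as a pure function over what the host-side watcher has observed. The sketch below is an assumption about the shape of such a check: the record names, thresholds, and messages are hypothetical, and the post does not describe how sing stops a session or gathers these timestamps.

```java
import java.time.Duration;
import java.time.Instant;
import java.util.Optional;

/** Hypothetical view of one agent session as seen from the host. */
record SessionState(Instant startedAt, Instant lastActivityAt, Instant lastCommitAt) {}

/** Hypothetical limits on wall-clock time, idle time, and time between commits. */
record Guardrails(Duration maxWallClock, Duration maxIdle, Duration maxWithoutCommit) {

    /** Returns the reason to stop the session, or empty if every limit still holds. */
    Optional<String> violation(SessionState state, Instant now) {
        if (Duration.between(state.startedAt(), now).compareTo(maxWallClock) > 0) {
            return Optional.of("wall-clock limit exceeded");
        }
        if (Duration.between(state.lastActivityAt(), now).compareTo(maxIdle) > 0) {
            return Optional.of("agent idle too long");
        }
        if (Duration.between(state.lastCommitAt(), now).compareTo(maxWithoutCommit) > 0) {
            return Optional.of("no commits within the expected window");
        }
        return Optional.empty();
    }
}
```

A watcher loop would call `violation` on a timer and, on a non-empty result, stop the session and restore the pre-run snapshot. Passing `now` in explicitly keeps the check deterministic and easy to test.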

A Throughput Multiplier

We’ve been running sing across all client engagements since January. The impact has been straightforward: less time fighting infrastructure, more time shipping.

An engineer switches between two active projects in the morning. Both environments start in seconds, services come up automatically, the agent from last night’s run left a clean summary. A new team member joins a project, pulls the config, creates the environment, connects via Zed remote dev, and is writing code the same day. An overnight agent run goes sideways. Roll back the snapshot, review what happened, rerun.

None of this is revolutionary. It’s plumbing. The kind that compounds. When you remove the friction of environment setup, project switching, agent cleanup, and state management, the team moves faster on the work that actually matters.

One binary. Zero dependencies. Fully declarative. It has been a real throughput multiplier for us.

sing is internal tooling today. If there’s enough interest, we’ll open-source it.